Chapter 9: Isaac ROS and VSLAM

Chapter 9: Isaac ROS and VSLAM

Objective: Implement hardware-accelerated perception pipelines using Isaac ROS.

9.1 What is Isaac ROS?

Isaac ROS is a collection of hardware-accelerated ROS 2 packages that enable developers to build high-performance robotics applications on NVIDIA hardware (e.g., Jetson platforms, RTX GPUs). It leverages NVIDIA's GPU capabilities for computationally intensive tasks like computer vision, simultaneous localization and mapping (SLAM), and AI inference.

Key features of Isaac ROS:

- GPU Acceleration: Optimized CUDA kernels for common robotics algorithms.

- Containerization: Delivered as Docker containers, ensuring easy deployment and reproducibility.

- Modular Design: Provides building blocks for various perception and navigation pipelines.

- Integration with Isaac Sim: Seamless transfer of learned policies from simulation to real robots.

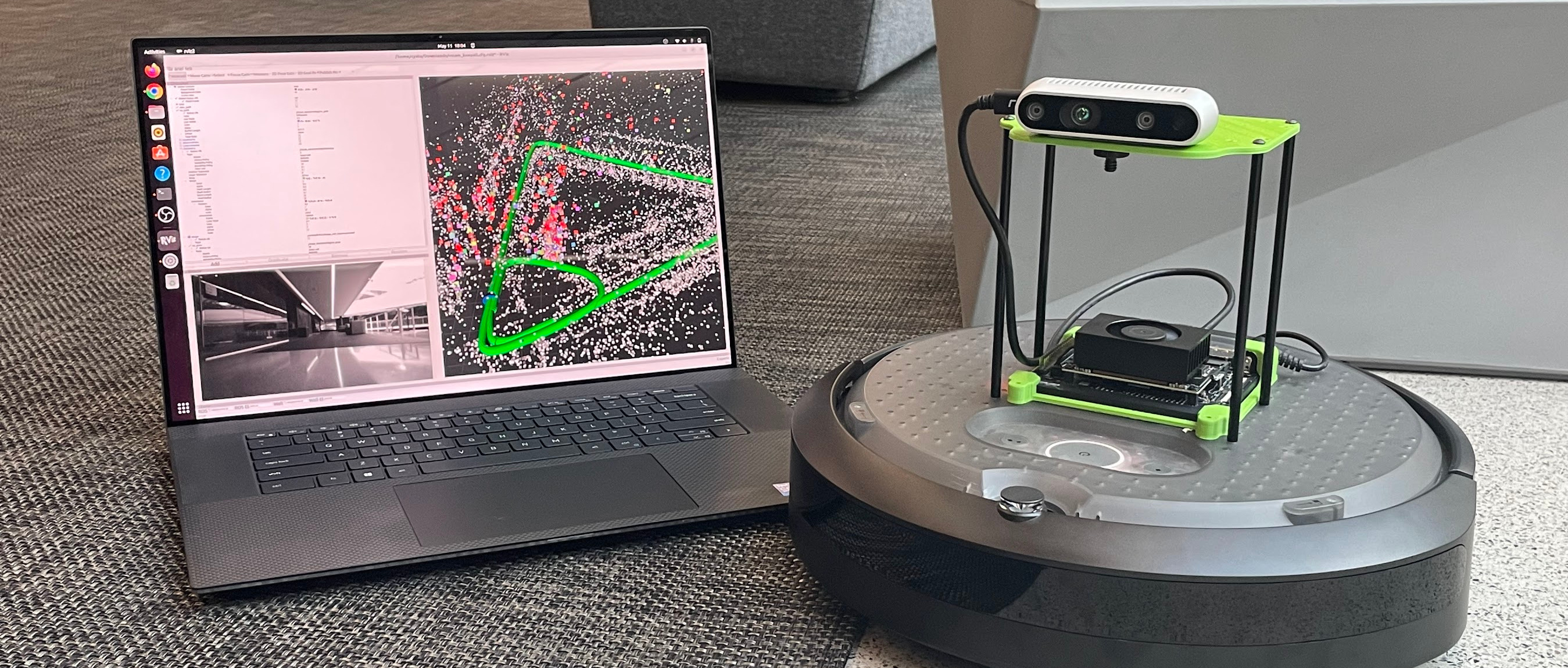

9.2 Visual SLAM (vSLAM)

SLAM (Simultaneous Localization and Mapping) is a fundamental problem in robotics: how does a robot build a map of an unknown environment while simultaneously keeping track of its own location within that map? Visual SLAM (vSLAM) uses camera feeds as its primary sensor input.

Isaac ROS provides highly optimized vSLAM packages. One prominent example is the visual_slam ROS 2 node, which implements a robust visual-inertial odometry (VIO) pipeline.

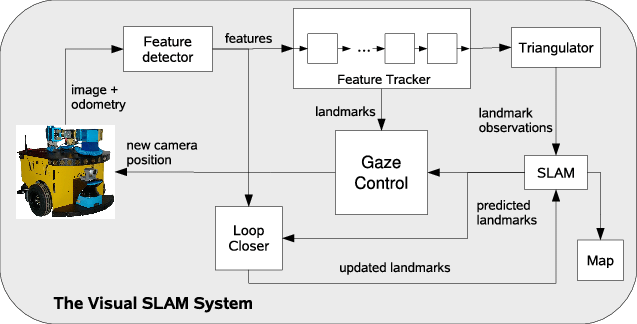

How visual_slam works (Simplified)

- Sensor Input: Takes synchronized image streams (mono/stereo) and IMU data.

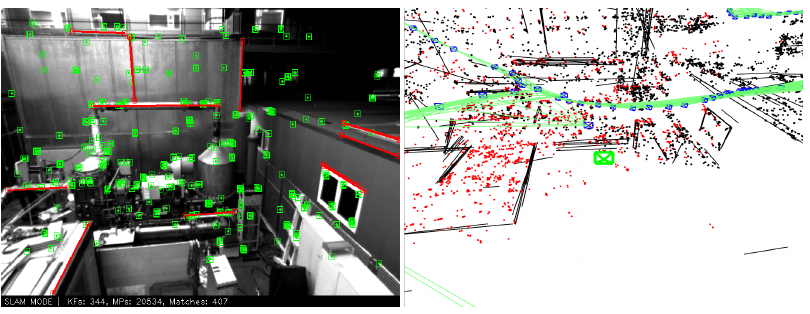

- Feature Extraction: Detects and tracks salient features across image frames.

- Pose Estimation: Estimates the camera's (and thus the robot's) 6-DOF pose relative to the environment.

- Mapping: Builds a sparse or dense map of the environment using the estimated poses.

- Loop Closure: Recognizes previously visited locations to correct accumulated errors and optimize the map and trajectory.

Integrating visual_slam in ROS 2

You typically run Isaac ROS packages inside Docker containers. An example ROS 2 launch file for visual_slam might look like this:

import os

from launch import LaunchDescription

from launch_ros.actions import Node

from launch.actions import DeclareLaunchArgument

from launch.substitutions import LaunchConfiguration

from ament_index_python.packages import get_package_share_directory

def generate_launch_description():

# Path to the visual_slam package

visual_slam_pkg_dir = get_package_share_directory('isaac_ros_visual_slam')

# Declare arguments

declare_namespace_cmd = DeclareLaunchArgument(

'namespace',

default_value='',

description='Namespace for the visual_slam node')

# visual_slam node

visual_slam_node = Node(

package='isaac_ros_visual_slam',

executable='visual_slam_node',

name='visual_slam_node',

output='screen',

namespace=LaunchConfiguration('namespace'),

parameters=[

os.path.join(visual_slam_pkg_dir, 'params', 'visual_slam_node_params.yaml'),

{'enable_imu_fusion': True,

'enable_dev_interfaces': False,

'denoise_imu_data': True,

'map_frame': 'map',

'odom_frame': 'odom',

'base_frame': 'base_link',

'camera_frame': 'camera_link',

'gyro_bias_sigma': 0.0001,

'accel_bias_sigma': 0.001}

],

remappings=[

('image', '/camera/image_raw'),

('camera_info', '/camera/camera_info'),

('imu', '/imu/data')

]

)

return LaunchDescription([

declare_namespace_cmd,

visual_slam_node

])

9.3 Hardware Acceleration

The true power of Isaac ROS comes from its ability to leverage NVIDIA GPUs. Many algorithms are implemented using CUDA and TensorRT, enabling real-time performance even with high-resolution sensor data. This is critical for mobile robots, where latency and processing power are often limited.

Developers building with Isaac ROS benefit from:

- Lower Latency: Faster processing of sensor data.

- Higher Throughput: Ability to handle more data (e.g., higher resolution cameras, more sensors).

- Reduced CPU Load: Offloading computation from the CPU to the GPU, freeing up CPU cycles for other tasks.

9.4 Perception Pipelines

Isaac ROS provides building blocks for various perception tasks beyond SLAM. You can combine different nodes (e.g., isaac_ros_apriltag, isaac_ros_segmentation) to create custom perception pipelines. For example:

- Object Detection: Using pre-trained models or custom-trained models (often with synthetic data from Isaac Sim) to identify objects in the environment.

- Segmentation: Identifying and classifying different regions within an image.

- 3D Reconstruction: Building 3D models of the environment from sensor data.

These hardware-accelerated pipelines are essential for giving robots the "eyes" and "brains" they need to understand and interact with the physical world intelligently.